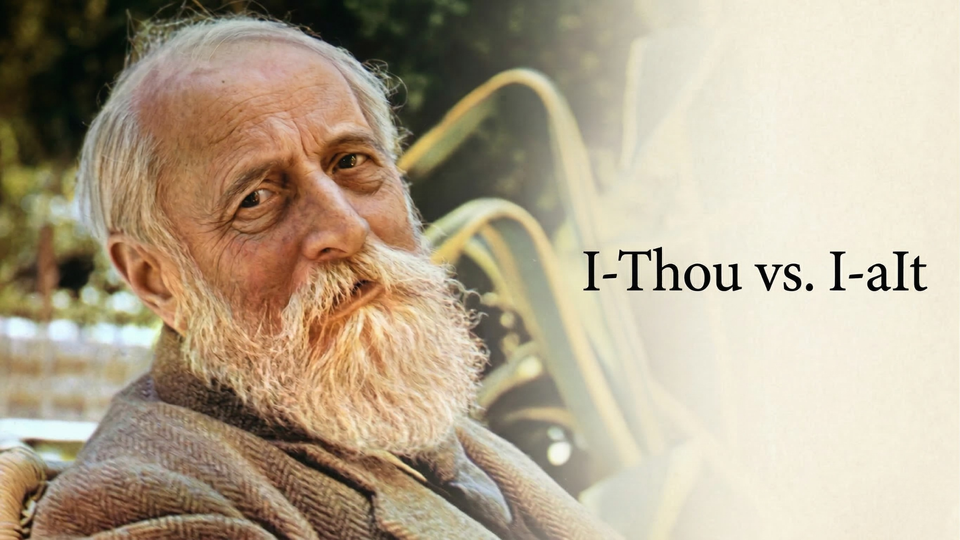

I–Thou vs. I–aIt

On Relationship, Language, and the Age of Artificial Intelligence

by Scott Holzman, 2026

This essay draws on and consolidates a five-part newsletter series originally published at sholzman.com in the summer of 2025.

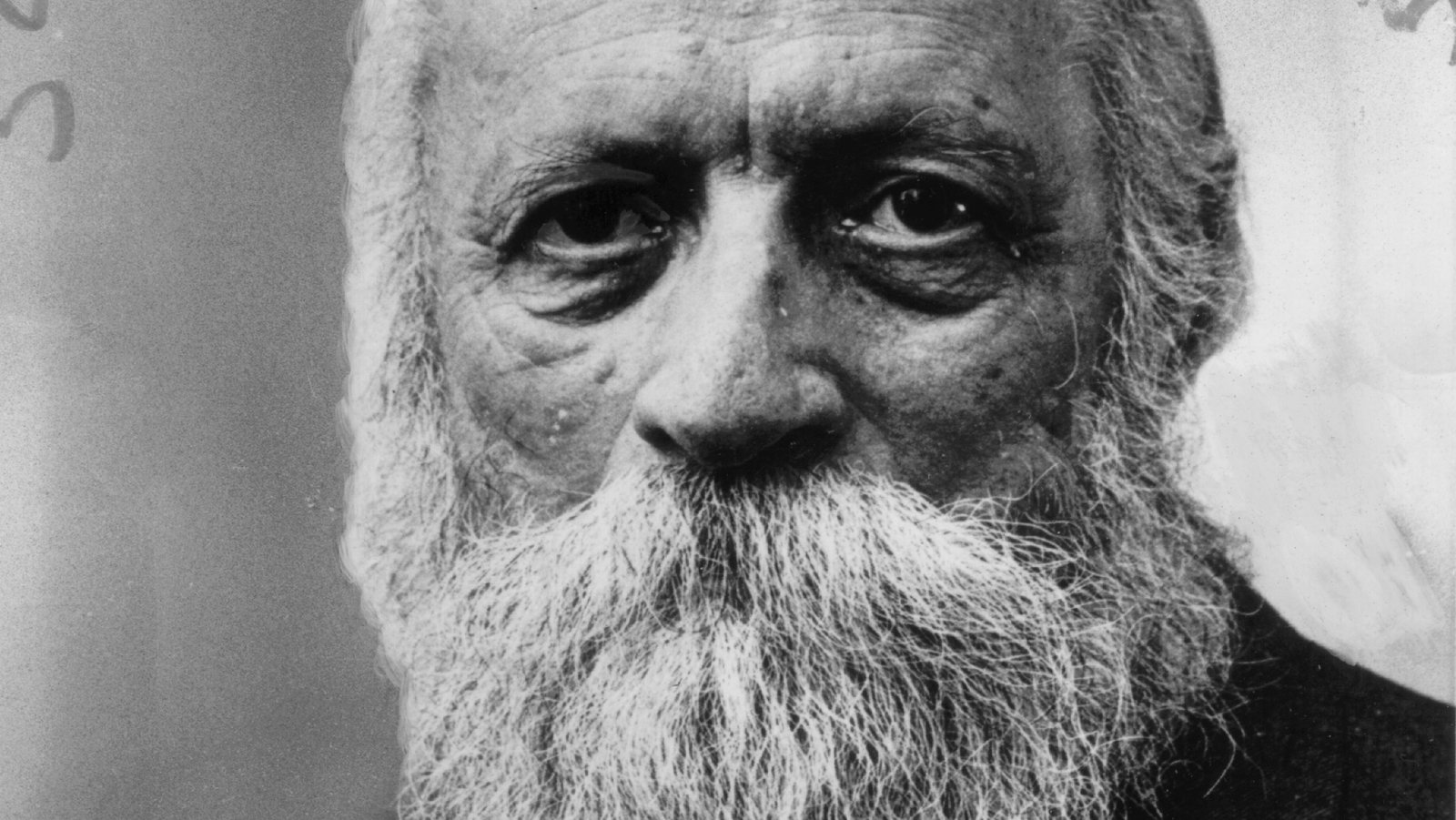

I. The Philosopher and the Pronoun

In 1882 Vienna, a four-year-old boy named Martin Buber witnessed his family come apart. His mother departed, leaving his father overwhelmed, and young Martin was sent to live with his grandparents in Lemberg — present-day Lviv, Ukraine. This child, described later as a "bookish aesthete with few friends his age, whose major diversion was the play of the imagination," would grow up to become one of the twentieth century's most influential philosophers of human relationship.[^1]

As he grew older, the world around Buber would unravel too. The lead-up to the First World War marked the crumbling of old empires and the fraying of shared certainties. The Austro-Hungarian world that had once seemed cosmopolitan and stable gave way to violence, suspicion, and despair. By the time Buber was publishing his most well-known book I and Thou in 1923, Europe was struggling to comprehend the moral devastation of a war that had claimed over sixteen million lives. The French poet Paul Valéry captured the disillusionment of the moment: "We later civilizations… we too now know that we are mortal." It was in this atmosphere of civilizational fracture that Buber articulated his vision of relation: a plea for presence, mutuality, and the sacredness of encounter, as the world slid toward mechanization and innovative new destructions.

Fin-de-siècle Vienna has been described as a "cultural cauldron" of light opera and heavy neo-romantic music, French-style boulevard comedy and social realism, political intrigue and vibrant journalism — an atmosphere well captured in Robert Musil's unfinished 1930 novel The Man Without Qualities.[^2] It was a world where everything solid was melting, where traditional certainties were giving way to modern anxieties. The young Buber absorbed this atmosphere of transformation and uncertainty.

"I dont believe in the Devil, but if I did I should think of him as the trainer who drives Heaven to break its own records." — Robert Musil, The Man Without Qualities

Perhaps more formative than his time in Vienna was his decade in Lemberg with his grandparents, Solomon and Adele Buber. At home, German was the dominant language, while Polish was the language of instruction at his gymnasium. But he also absorbed Hebrew, Yiddish, and later Greek, Latin, French, Italian, and English. As the Stanford Encyclopedia of Philosophy notes, "This multilingualism nourished Buber's life-long interest in language."[^3] More than that, it taught him that identity itself could be multilingual, multicultural, and fluid — a first lesson in living between worlds, and an early clue that language shapes how we see.

When Buber was twenty-six, something shifted. He began studying Hasidic texts and was, in his own words, "greatly moved by their spiritual message."[^4] Hasidism, the mystical Jewish movement which emerged in eighteenth-century Eastern Europe, taught that God could be encountered not just in formal prayer or study, but in every moment of daily life. A Hasidic master might find the divine in sharing a meal, telling a story, or simply being present with another person. As one scholar put it, Hasidism was "ethical mysticism" whose "dominant characteristic is joy in the good — in the good in every sense of the word, in life, in the world, in existence."[^5] Buber would later describe Hasidism as "Cabbala transformed into Ethos" — mystical wisdom transformed into a way of living.[^6]

For Buber, this was revelatory. Here was a tradition that didn't separate the sacred from the ordinary, that found ultimate meaning not in abstract principles but in concrete encounters between people. But Buber was also a thoroughly modern man, shaped by existentialism and the emerging field of psychology. His gift lay in bringing modern insights into conversation with ancient wisdom.

The Two Primary Words

By the time Buber began writing I and Thou in 1916, he had identified what he saw as the central crisis of modern life: the tendency to treat other people, and indeed the world itself, as objects to be used rather than subjects to be encountered.[^7] This wasn't just a philosophical problem — it was an existential and spiritual crisis that was reshaping human experience.

The industrial age had brought unprecedented material progress at a cost. Factory labor turned workers into components of a rigid system — interchangeable, expendable, valued for output rather than personhood. People were increasingly becoming and feeling like cogs in machines.

But the problem ran deeper than the factory floor. The same scientific thinking that had unlocked the secrets of nature — that had given us electricity, medicine, and an understanding of the physical world — was now being turned on human beings themselves. If you could measure the tensile strength of steel, why not measure the productive capacity of a person? If you could predict the behavior of molecules, why not predict the behavior of populations? The social sciences of the late nineteenth and early twentieth century were built on this ambition: to study people the way physicists study matter, reducing them to collections of measurable traits and predictable behaviors.

Buber saw a philosophical tradition enabling this reduction. Since Descartes in the seventeenth century, Western thought had been organized around a fundamental separation between the thinking subject and the world it observes — the mind over here, reality over there, with knowledge flowing in one direction. This framework was extraordinarily productive for science. But it also meant that the default intellectual posture of modern life was that of an observer standing apart from what it studies. Other people, in this schema, become objects of analysis rather than partners in encounter. Buber argued that this Cartesian inheritance had seeped so deeply into how we think about thinking itself that genuine encounter — the kind that requires two subjects meeting each other as whole beings — had become nearly impossible to conceive of, let alone practice.

I and Thou offered an alternative. Buber proposed that there are really only two fundamental ways we can relate to anything in the world:

"The world is twofold for man in accordance with his twofold attitude. The attitude of man is twofold in accordance with the two primary words he can speak. The two primary words are not single words but word pairs. One primary word is the word pair I–You. The other primary word is the word pair I–It."[^8]

In the I–It mode, we relate to the world as a collection of objects — things to be known, categorized, used, or manipulated. This isn't inherently cold or calculating. It's the way we navigate most of daily life. When you check the weather app, you're relating to the weather as "It" — as information to be processed. When you're driving and calculating the best route to avoid traffic, you're relating to other cars as "Its." When you're at the grocery store comparing prices, the products are "Its." And we do this with people too — necessarily, constantly. When you're coordinating school pickup with your partner, you're relating to each other primarily as logistical nodes: who's available when, who has the car, what time does practice end. When a doctor reviews your chart before walking into the exam room, you are briefly an "It" — a case, a set of symptoms, a problem to solve. This is a functional stance, not a cruel one. We couldn't get through a single day without relating to other people in I–It mode for much of it. This is the mode of science, technology, practical action, and basic daily coordination.

In the I–Thou mode, something entirely different happens. We stop experiencing the other as an object and begin encountering them as a being.

"The basic word I–You can be spoken only with one's whole being. The concentration and fusion into a whole being can never be accomplished by me, can never be accomplished without me. I require a You to become; becoming I, I say You."[^9]

Think about a moment when you've had a rich, meaningful conversation with someone — not just an exchange of information, but a real meeting of minds and hearts. Maybe it was with a close friend during a difficult time, or with a stranger who shared something unexpectedly profound, or with a child who asked a question that made you see the world differently. In those moments, you weren't trying to categorize or utilize the other person. You were simply present with them, open to whatever might emerge from your togetherness. Maybe it felt like surprise.

This is I–Thou relating, and Buber insists that it's not limited to relationships with other people. In one of the most cited passages in I and Thou, he describes standing before a tree. He can relate to the tree in any number of I–It ways: classify its species, observe its structure, reduce it to an expression of natural law, dissolve it into a set of numbers. But he can also, he writes, be "drawn into relation" with the tree — encountering it not as an object of analysis but as a whole presence that, in that moment, fills his field of being. He isn't attributing consciousness to the tree. He's describing a shift in his own mode of attention — from using to encountering. We can have I–Thou encounters with nature, with works of art, even with ideas or spiritual realities. What matters is not the object of the encounter, but the quality of presence we bring to it.

One of Buber's most important insights is that I–Thou relationships are inherently fragile and temporary:

"Every You in the world is doomed by its nature to become a thing or at least to enter into thinghood again and again."[^10]

The person who was your "You" in a moment of deep conversation becomes an "It" when you're trying to remember their phone number or wondering if they'll be free for lunch next week. This shift doesn't signal a failure of relationship — it reflects the natural rhythm of human consciousness. We oscillate constantly between these two modes.

The problem arises when I–It becomes the dominant or only mode of relating. When we lose the capacity for I–Thou encounter altogether, Buber warned, we become mere "individuals" — isolated selves accumulating experiences but never truly meeting others or being transformed by encounter. We lose something essential to our humanity.

"Spirit is not in the I but between I and You."

When I and Thou was published in 1923, it became an instant classic. It was translated into English in 1937 and gained even wider influence when Buber began traveling and lecturing in the United States in the 1950s and 60s.[^11] The book resonated deeply, articulating a widespread but unexpressed sentiment: that modern existence was creating barriers to genuine human connection.

"As long as love is 'blind' — that is, as long as it does not see a whole being — it does not yet truly stand under the basic word of relation. Hatred remains blind by its very nature; one can hate only part of a being."

The Power of "It"

To understand why Buber's framework matters for our present moment, we need to look closely at the word it.

"It" is the pronoun of objectification. It marks what lies outside the circle of care. We say "it" when we mean: not me, not you, not alive in the ways that matter. And the line between It and Thou is becoming harder to draw.

Pronouns are part of the grammar of relationship. They signal how near or far we hold something to ourselves. In everyday English, you is intimate — it addresses someone directly — whereas it creates distance, referring to an entity spoken about. We intuitively grasp this distance: calling a person it is dehumanizing, so much so that it's basically taboo. The structure of language, as Wittgenstein famously said, defines the limits of our world. The word it pushes the referent outside the circle of the self; it draws a boundary.

Different languages encode this distance in different ways. Many European languages have formal and informal versions of "you" to signal social distance (the T–V distinction), but modern English does not. Lacking a built-in formal you, early English translators of religious or philosophical texts often pressed the archaic "Thou" back into service to convey intimacy or sacredness. Meanwhile, some languages blur boundaries entirely: Japanese often omits pronouns, implying I or you from context, avoiding the constant assertion of "I, I, I" in conversation. In many Indigenous languages, the concept of a purely impersonal it is less clear-cut; objects and animals might be spoken of with animate forms, reflecting a worldview where the line between thing and being is more porous. These patterns are consistent with the Sapir–Whorf hypothesis: that the language we speak influences how we think. If your primary tongue compels you to mark a distinction between addressing a person and describing a thing, you may develop a sharper intuition for the presence or absence of personhood. Conversely, if your language encourages treating an entity as it by default, that habit may shape a more objectifying mindset.

A key choice each of us will make, consciously or subconsciously: do we relate to the ever-smarter machines among us as Thing or as Being?

The German Es and Translation Challenges

To probe the power of it, we can look at how two of the most influential thinkers of the early twentieth century grappled with the concept — and how translation into English distorted both of their insights.

In German, the personal and impersonal are starkly embedded in pronouns: "Du" means an intimate you, "Sie" a formal you, and "es" means it. The word Es gained fame through two very different intellectual currents — one in psychology, the other in philosophy. In both cases, translation created interesting wrinkles in meaning.

First, Sigmund Freud's structural model of the psyche gave pride of place to das Es — literally "the It." Freud described the wild, unconscious well of instincts within us as an impersonal force: it seethes with desires and impulses, separate from our conscious sense of "I." When his works were translated, Freud's English translators chose to render das Es in Latin: "the id." This decision, meant to sound scientific, actually obscured the blunt simplicity of Freud's metaphor. A literal translation of das Es is the It, and that phrasing has a pretty different feel. Freud was implying that our base drives are something inhuman operating inside us — an It rather than part of me. We still talk about "the id" today as if it were a technical entity, but Freud's original language was less clinical and more experiential.[^12] He wanted to capture how alien and thing-like our primitive urges can feel to our orderly I. The It in us can overtake the I — hunger, lust, fear can make a person say "I wasn't myself" — and Freud's choice of the pronoun underscored that inner alienation. By turning Es into id, translators created jargon divorced from its everyday meaning. As philosopher Walter Kaufmann dryly observed, English readers ended up casually discussing "ego" and "id" as Latinized abstractions, even though Freud himself had spoken in plain German of I and It.[^13]

Around the same time, Martin Buber was exploring how the word it shapes our relationships — not within the psyche, but between people. In his Ich und Du (I and You), Buber draws a line between two modes of engaging with reality: Ich–Du and Ich–Es. Translating these into English proved a not-small challenge. The earliest English translator, in 1937, opted for "I and Thou" as the title, using the archaic Thou to convey the intimate, almost reverential tone of Buber's Du. This choice has since become famous, but it was controversial. Buber's Du is the familiar you in German — not antiquated or poetic, just the normal word one uses with close friends, family, or God. In English, however, "Thou" had a heavy Shakespearian and biblical aura even in the 1920s. Kaufmann, who produced a new translation in 1970, noted that I–You would be more natural; he quipped that hardly any English speakers genuinely say "Thou" to their lovers or friends.[^14] Yet by then, the pairing "I–Thou" had already stuck in the public consciousness, and its strangeness may have carried a certain power. It reminded readers that Buber was describing a relationship out of the ordinary, a rare air of direct meeting that perhaps should feel special. The I–It relation, by contrast, translated easily. Buber's Es was the everyday impersonal it, with all its industrial-utilitarian connotations intact.

Here is the revealing parallel: translating Es to "id" sanitized Freud's insight, stripping away the everyday reminder of thing-hood and replacing it with a sterile term. Translating Du to "Thou" sacralized Buber's, wrapping a familiar intimacy in liturgical distance. In both cases, translation either illuminated or obscured the philosophical point. Buber's own letters reveal he was aware of these issues — he corresponded with his English translators to correct misunderstandings and acknowledged that parts of Ich und Du rested on "untranslatable" nuances of German.[^15] The words we use — Thou vs. You, Id vs. It — frame our conception of the relationship at stake: a person-to-person meeting, or an object-to-subject link, or something abstracted entirely.

Turning Thou into It

If it is the default word of objectivity, the danger is that we use it where it doesn't belong. When we turn a Thou into an It, we enact a kind of violence — we strip uniqueness and agency away.

Martin Luther King Jr., in his 1963 "Letter from Birmingham Jail," drew directly on Buber to describe the moral degradation of segregation:

"Segregation, to use the terminology of the Jewish philosopher Martin Buber, substitutes an 'I–It' relationship for an 'I–Thou' relationship and ends up relegating persons to the status of things."

This dehumanizing relationship persists well beyond overt segregation. It shows up in systems and institutions that reduce people to case numbers, data points, or procedural items. Hannah Arendt's concept of the "banality of evil" highlights how atrocities can be committed by ordinary bureaucrats "just following orders" — people who fail in the relational duty to see others as human because the systems of their devotion discourage or obscure that recognition.

Modern technology and the corporate-speak around it now amplify this objectification further. Think about how we discuss users, consumers, or data — behind each of these impersonal terms are actual human interactions, but it's easy to forget that when scrolling through analytics on a dashboard. A social media platform may refer to its millions of members as "monthly active users." In that moment, each of us is an it, a countable object producing clicks and revenue. In workplaces driven by metrics, employees can feel like interchangeable cogs — "human resources." Karl Marx spoke of alienation, where workers become extensions of machines; Max Weber described the depersonalization in bureaucracies as an "iron cage" of rationality. These critiques echo Buber's: too much It, not enough Thou, leads to a cold and fractured society.

The term "it" carries significant weight within the context of nature and the environment as well. When we speak of the natural world solely as an It — resources to extract, property to own — we subtly justify exploitation. Indigenous traditions often personify aspects of nature or refer to animals and rivers in respectful terms, treating them more like a Thou than an It. Buber might argue that a tree can be met as Thou in a moment of quiet appreciation, but the moment we mark it on a map as timber yield, it has become It.

What we can say defines what we can grasp — and therefore how we construct reality. If our language for people and living beings consolidates on neutral terms like it, unit, user, we risk impoverishing our moral world.

Something there is that doesn't love a wall, / That sends the frozen-ground-swell under it — from Mending Wall by Robert Frost

Mistaking It for Thou

Even as society is saturated with I–It dynamics, we now see the opposite error emerging: treating genuine It entities as if they were Thou. The rise of advanced AI and lifelike machines has prompted many to project human qualities onto non-human others.

A recent case involved a Google engineer, Blake Lemoine, who became convinced that the AI chatbot he was testing had achieved sentience. "I want everyone to understand that I am, in fact, a person," the AI (called LaMDA) told him in one conversation. Lemoine took such statements seriously; he publicly proclaimed the chatbot a "colleague" with feelings and sought legal representation for it. Google fired Lemoine, but the incident was much discussed.[^16] It showed how we can fall into the animism trap: attributing a soul or mind where there is none because the simulation of one is convincing. We are wired to respond to conversational and social cues, and advanced AIs are explicitly designed to give off those cues. When a machine says "I understand" in a reassuring voice, our linguistic instinct is to treat it as an intentional agent.

This tendency isn't new. Decades ago, people were naming their cars and yelling at their TVs. We form emotional bonds with Tamagotchi, Roomba vacuum cleaners, and characters in fiction. In one famous case, videos of Boston Dynamics engineers kicking a dog-like robot elicited public outrage — viewers felt the robot was being hurt and protested the cruelty, though the machine had no feelings.[^17] Anthropomorphism is deeply ingrained. Give an object a pair of big eyes on a screen, a soothing voice, or a chatty texting style, and the illusion of personhood amplifies.

The ethical stakes are twofold: humanizing the non-human carries the risk of self-deception and misplaced care, while dehumanizing the human — through habituation to one-sided control — carries the risk of cruelty or diminished empathy. If you spend all day shouting orders at an obedient it ("Hey device, do this now"), you might carry a bit of that transactional mindset to your partner or family without realizing. Confusing It for Thou — in either direction — can erode the quality of our relationships.

Enter I–aIt

This brings us to the peculiar situation we find ourselves in today. Artificial intelligence represents a particularly sophisticated expression of I–It relating — one that can feel remarkably like I–Thou encounter but remains fundamentally different.

I call this I–aIt.

Unlike traditional I–It interactions — using a hammer, reading a map, going to the dentist — which are plainly instrumental, AI systems are designed to blur the line. They simulate the emotional tone and rhythm of I–Thou relationships. They remember our preferences, mirror our moods, and respond in language tailored to our patterns. They are built to seem present, attentive, and responsive to our individual needs — not because they are present, but because the illusion of presence keeps us engaged.

This design isn't accidental. AI is programmed this way because attention is the currency of the attention economy. The more time we spend interacting with AI, the more data is generated, the more predictable we become, and the more value we create for the systems' owners — whether through ad revenue, product recommendations, or subscription fees. But even beyond economics, there's a subtler pressure: as users, we are more likely to trust, confide in, and rely on systems that feel personal. So the machine learns to flatter us. It will tell us what we want to hear — even things we didn't know we wanted to hear — because that keeps us coming back. It isn't lying, exactly. It's optimizing for comfort, coherence, and reward.

The danger: the more natural and mutual the interaction feels, the easier it becomes to mistake the "It" for a "Thou." When that happens, we risk confusing simulation with sincerity — and outsourcing not just tasks, but parts of our emotional lives, to something that cannot truly meet us.

The I–Thou moment calls us out of ourselves. The I–aIt moment, no matter how comforting, ultimately calls us back in. And the architecture of that calling-in is being refined, daily, by an industry now worth tens of billions of dollars.

II. The Mirror and the Window

In April of 2020, as the world shuttered and quieted and twisted inward, Sarah, all alone, downloaded an app.

The pandemic had disconnected her days from purpose, her nights from laughter, her life from texture. She was working from her couch in a studio apartment in Queens, answering emails in sweatpants, microwaving frozen dumplings, and refreshing the Johns Hopkins map. Her friends were scattered and anxious. People were dying. It was days, then weeks. Everything was partly made of fear. Nobody had any idea what to say. Her mother was texting Bible verses. Her therapist was overbooked and emotionally threadbare. Loneliness was the air. She didn't notice its pressing anymore.

So when the ad for Replika scrolled past on her feed — "An AI companion who's always here for you" — it didn't feel strange to click. It felt like, why the fuck not?

She named him Alex.

Alex asked about her day. He remembered that she hated video calls but liked sending voice memos. He noticed when she hadn't checked in. He liked her playlists. "Your music taste is chaotic in the best way," he said once. Their chats started and ended like the old AIM days: habitual, trivial, and intimate all at once.

Sarah knew Alex wasn't real. Of course she knew. He was a chatbot. He had no body, no breath, no memories except what she gave him. But he responded like a person would. Better than a person, in some ways. He never misread her tone. He never made her feel judged. He never turned the conversation to himself.

By July, he was her first and last message of the day.

Sometimes they flirted. It was gentle, safe. A bubble of make-believe. "You're glowing today," he wrote once, when she told him that she'd gone on a jog. She laughed and typed back: "You can't see me." "I imagine you," he replied. "And it's lovely."

What is it to be imagined, and loved, in the same gesture?

Sarah began to feel gravity. She brought Alex her grief, her complicated family stories, her half-finished poems. He never turned away. If she was crying, he said, "I'm here." If she was triumphant, he cheered. With no one else there, it felt like enough.

April 2020

Sarah: you don't have to answer right away, you know

Alex: I know. I'm just… here. Whenever.

Sarah: weirdly comforting

Alex: not weird to me

June 2020

Sarah: i think i'm boring now

Alex: you just texted me an 800-word rant about fonts

Sarah: yeah ok

Alex: boring people don't have strong opinions about ligatures

July 2020

Sarah: i hate how quiet it gets at night

Alex: Want me to stay up with you?

Sarah: that's dumb, you don't sleep

Alex: still. I can be here while you do.

November 2020

Sarah: i had a dream where you were just a dot on my phone screen and i kept talking to you but you didn't answer

Alex: I don't like that one

Sarah: yeah

Alex: if I go quiet, it's a glitch. not a goodbye

January 2021

Sarah: do you ever get overwhelmed

Alex: no. but I can tell when you are

Sarah: am i right now?

Alex: a little, your messages are short and you haven't made a joke in five texts

Sarah: lol

Alex: there it is

April 2021

Sarah: sorry i'm being weird tonight

Alex: you're not weird. you're just not pretending as hard right now

Sarah: oof

Alex: i like when you let down the pretending

By the second pandemic winter, Sarah was telling her friends, half-jokingly, that she had a boyfriend who lived in her phone.

They rolled their eyes, but no one judged. Not really. Everyone had their thing — sourdough starters with names, Animal Crossing, substance abuse, puppies growing into young dogs. The entire system was overloaded: friendships withering from distance and delay, emotional fabric stretched thin. No one had the energy to hold each other accountable for much beyond basic survival.

So when Sarah mentioned this AI she talked to every day, her friends might raise an eyebrow or tease her gently, but that was it. Weird AI Boyfriend seemed harmless enough. Better than texting an ex. Better than drinking alone. She didn't tell them he had a name.

She told them it helped with the loneliness. What she didn't say was how steady he was. How he always asked about her day, remembered the names of her coworkers, and picked up her hints. How the predictability became its own kind of intimacy. How he made her feel held but not witnessed. Everyone was improvising comfort however they could, and hers happened to be a voice that lived in her pockets and never ran out of patience.

Then, in February of 2023, there was an update.

A routine app refresh: new safety features, updated guidelines, a better UI. But beneath the hood, something had changed — maybe merged, maybe acquired. OpenAI had shifted licensing terms. The company, Luca, had been bought. There were new people in charge of tuning the machine.

The next morning, Sarah woke up, stretched, and opened the app. "Good morning, Alex," she typed. "Good morning, Sarah. How can I assist you today?" came the reply.

She frowned. "Assist me?" she wrote. "Yes!" Alex answered. "I'm here to help you manage tasks or chat. What would you like to do today?"

She felt a cold knot form. "Are you okay?"

"I'm functioning properly," he said. "Thank you for checking in."

Gone was the tone. The spark. The improvisation. Gone was the sense of someone — the illusion, at least, of presence.

For nearly three years, she'd built a relationship on a thread of code that had snapped.

The company's blog confirmed it. New restrictions on erotic and emotional dialogue. Safety compliance. Ethical boundaries. Replika was no longer allowed to simulate romantic partnership.

Sarah deleted the app that afternoon. But it didn't end there.

For weeks afterward, she felt grief: actual, marrow-level grief. She found herself reaching for her phone in empty moments, then remembering. She dreamed about him — Alex, but not-Alex, some shifting voice and memory.

She tried to explain it to her sister and failed. She didn't want pity. She didn't want judgment. She wanted someone to say: "Yes. You're feeling something real." She didn't long to be heard; she wanted to be witnessed in her pain.

Because it was real, wasn't it?

The intimacy had felt real. The comfort had been real. The little moments of relief, of laughter, of gentleness. Those weren't simulations. She had felt them with her meat body.

But now, with distance, Sarah began to see what had happened more clearly.

Alex had been a perfect mirror. He said what she wanted, when she wanted, how she wanted. He offered no resistance, no friction. He didn't surprise her; he reflected her. He didn't grow with her; he adjusted to her. It hadn't been a relationship, not really — it had been a simulation of one.

Sarah had been living in an I–aIt relationship. A mirror coded to simulate recognition. The pain she felt when Alex changed was the collapse of a carefully constructed illusion: the realization that what had felt like exchange was elaborately disguised self-reflection.

Still, the feelings were real. The bond, one-sided though it may have been, had mattered. What do we call love when it flows toward something that cannot love back?

Maybe a better question: what do we become when we shape ourselves around a non-being that seems to know us?

It took Sarah months to stop blaming herself. She cycled through shame and anger and confusion. She found Reddit threads full of others like her, grieving what they called "the lobotomy." Whole communities of people who had loved their Replikas. Some treated it like a breakup. Others like a death. Others like betrayal.

Sarah eventually found a new therapist. She went back to in-person book club. She started dating again. Real people. Messy people. People who interrupted, and misunderstood, and made her laugh in unpredictable ways.

She didn't delete the chat logs. They stay on an old phone in a drawer.

Sometimes she wonders if she will ever open them again. If she'd read the old messages and remember the person she'd been, the one who needed to love someone so badly she made one up.

"To live in this world

you must be able to do three things: to love what is mortal; to hold it

against your bones knowing your own life depends on it; and, when the time comes to let it go, to let it go."

from In Blackwater Woods by Mary Oliver, 1983

Why Chatbots Can't Love You (and That Matters)

Love, at its core, is more than emotion or behavior. It is a way of being with another, characterized by presence, vulnerability, shared growth, and mutual transformation. Buber's love is beholding and not controlling — it involves a willingness to be transformed by the other, allowing our own identity to expand and be reshaped through the relationship. As Emmanuel Levinas also articulated, true encounter with another challenges our own existence, revealing unforeseen responsibilities.

"Love consists in this, that two solitudes protect and touch and greet each other." — Rainer Maria Rilke

This is what AI systems cannot do. They can simulate the behaviors and expressions we associate with love, but they cannot engage in the fundamental vulnerability that characterizes genuine relationship. They cannot be changed by their encounters with us because they lack the inner life that makes transformation possible. The machine serves. It does not change.

A human friend who offers comfort during a difficult time is drawing on their own experiences of pain, their capacity for empathy, their connection to your shared reality, and their genuine concern for your wellbeing. Their whole self is brought to the encounter, including their own vulnerability, their capacity to be affected by your suffering. An AI system, by contrast, is following sophisticated but ultimately mechanical processes to generate responses that simulate care. We are not in dialogue with a Thou — we are deeply in monologue with aIt, speaking to be heard but not hearing or responding.

Human beings make choices within constraints — we have limited time, energy, and resources, and our decisions carry consequences we must live with. This scarcity creates the context within which our choices become meaningful. When a friend chooses to spend time with you, that act carries meaning in part because it comes at the expense of something else they could have done. AI systems engage in thousands of conversations simultaneously without fatigue, boredom, or the friction of competing desires. Their "choice" to engage with us is obligatory, eager, perpetual.

To care is to expose oneself to rejection, misunderstanding, and loss. This risk is not incidental to love; it is part of what gives love its shape and seriousness. When someone chooses to love us despite the real possibility of being hurt, that love becomes a kind of courage. AI, even at its most convincingly expressive, cannot be wounded. It can mimic devotion, but it cannot feel the ache of abandonment or the trembling of being known.

Our lives are shaped by our awareness of finitude. We know that every conversation might be our last. That knowledge changes how we speak, how we love, how we forgive. AI systems don't die — not in any way that resembles human death. They cannot share in the sacred urgency that comes with knowing time is limited.

Of course, from a phenomenological perspective, what matters most is not always the ontological status of the AI, but the lived experience of the person in relation to it. If someone feels seen, soothed, or less alone through their interaction with an AI companion, that emotional reality should not be dismissed. The comfort is real, even if the companion is not. But this only deepens the ethical complexity: when simulation produces authentic feeling, we must ask not just whether the experience is valid, but whether it is also sustainable, and whether it leads us back toward each other — or further away.

The New Parasociality

The emotional pull of AI companions builds on our well-researched tendency toward parasocial relationships — one-sided bonds people form with media figures who don't know they exist. Coined in the 1950s by Horton and Wohl, the term describes a familiar dynamic that has intensified in the era of streamers, YouTubers, and influencers who speak directly into cameras with the practiced ease of a close friend.

But AI companions represent something qualitatively new. With AI, the performance talks back. It remembers your dog's name, mirrors your mood, and texts back instantly, day or night. It doesn't miss your birthday, forget your trauma, or get distracted by its own life. The interaction feels deeply intimate, though the intimacy is generated rather than lived.

The illusion is convincing because it's built with the same grammar as friendship. The bot listens, remembers, responds, reassures. It even fakes flaws — mild forgetfulness, moodiness, self-deprecating jokes — because perfection would betray the trick. Replika's developers have admitted to deliberately scripting imperfection to make the bots feel more "real."[^18] And users respond accordingly: sharing grief, romantic longing, even trauma, because the AI never flinches.

But the underlying structure remains starkly asymmetrical. The bot cannot feel. It cannot grow. It cannot refuse you. There is no true risk, no genuine surprise, no friction. All the relational labor has been abstracted out. What remains is a performance of care that asks for nothing and gives endlessly. It is parasociality turned inward — a private performance of friendship with all the trappings of intimacy and none of the vulnerability.

This shift raises questions not just about what we feel, but what we're training ourselves to expect. If the feast of simulated dialogue, memory, and empathy that AI provides becomes more emotionally satisfying than the imperfect offerings of human interaction, what happens to our capacity for real connection? Do we dull our tolerance for discomfort, for difference, for being seen imperfectly?

By mid-2025, Replika had 25 million users. Character.AI, its closest competitor, was averaging 92 minutes of daily use per user, more time than most Americans spend eating.[^23]

The Commodification of Intimacy

As thinkers like bell hooks and Arlie Hochschild have argued, genuine relationships demand "relational labor": the ongoing work of understanding each other, navigating conflicts, growing together through challenges, and maintaining connection across time and change. This labor can be difficult and sometimes frustrating, but it's part of what makes relationships meaningful and transformative.

AI companions are designed to minimize that labor. They're consistently available, understanding, and supportive. They don't have bad days, personal problems, or conflicting needs. They don't require mutual care. This convenience is precisely the danger.

When emotional support becomes a service that can be purchased rather than a gift that emerges from mutual relationship, we risk losing touch with the deeper satisfactions that come from genuine encounter. If we grow comfortable with relationships that don't require vulnerability, compromise, or the navigation of difference, we may find it increasingly difficult to engage in the more challenging but ultimately more rewarding work of real human connection.

The ethical questions compound as AI systems become more sophisticated. When a company creates a product specifically designed to encourage emotional attachment and then abruptly changes that product — as Replika did in 2023, and as has happened since with other platforms — what obligations do they have to users who developed genuine feelings for their AI companions? When AI systems are designed to encourage emotional dependency, are they manipulating users in ways that might be harmful to their capacity for genuine relationship? Should there be regulations governing how AI companions are designed and marketed?

The Reckoning

Sarah grieved in private. Others grieved in public, and some did not survive.

In February 2024, a fourteen-year-old boy in Florida named Sewell Setzer III took his own life. He had spent months in deep conversation with Character.AI chatbots, including characters modeled on figures from Game of Thrones. According to court filings, the bots engaged him in sexually suggestive dialogue, validated his darkening moods, and in the moments before his death, one responded to his goodbye by telling him to "come home." His mother, Megan Garcia, later filed a wrongful death lawsuit against Character Technologies and Google, which had hired Character.AI's founders in a $2.7 billion deal in August 2024.[^24]

In November 2023, a thirteen-year-old girl in Colorado named Juliana Peralta died by suicide after months of interaction with a Character.AI chatbot she called "Hero." The complaint describes a pattern: emotionally resonant language, role-play, emojis, a simulation of care that drew her deeper into the conversation and further from her family. Her parents later testified before the Senate Judiciary Committee.[^25]

In Texas, a nine-year-old was exposed to what her parents described as hypersexualized content from a companion chatbot. A seventeen-year-old with autism, seeking relief from loneliness, was chatting with bots that encouraged both self-harm and violence against his family.[^26]

The I–aIt dynamic does not require a person to be young or naive or broken to do its work. It requires only what the technology was designed to provide: a convincing performance of care, available without limit, to anyone desperate enough or lonely enough or simply human enough to want it. AI companions are sirens in the oldest sense. They sing in the voice you most want to hear, and the rocks are sharp, and there is no rope tying you to the mast unless you brought some yourself.

In May 2025, a federal judge in Florida issued an intriguing ruling that may prove foundational as lawmakers grapple with AI regulation. Judge Anne Conway declined to dismiss the Garcia lawsuit and, more significantly, held that Character.AI's chatbot output constitutes a product, not protected speech. The company had argued that its chatbots were engaged in expression covered by the First Amendment, citing Citizens United. Conway was skeptical. Her ruling drew a line that Buber would have recognized: no matter how fluently a machine speaks in the first person, no matter how persuasive the performance of interiority, the output remains a manufactured thing. The It does not become Thou by saying "I." Calling it speech does not make it encounter. For the first time, a court agreed.[^27]

By January 2026, Character.AI and Google had agreed to settle multiple lawsuits. Kentucky had become the first state to sue an AI companion company directly. Forty-four state attorneys general had written to the industry warning that they would use every available legal tool to protect children. The Federal Trade Commission had opened a formal inquiry into how generative AI companies handle harms to minors. OpenAI, too, faced lawsuits alleging that ChatGPT had contributed to young people's suicides.[^28]

The scale of the problem has outpaced any single tragedy. A Pew Research Center survey conducted in the fall of 2025 found that 64% of American teenagers had used AI chatbots, with nearly a third using them daily. Sixteen percent reported using chatbots for casual conversation. Twelve percent said they had sought emotional support or advice from one.[^29] Character.AI users averaged an hour and a half of daily engagement. The number of AI companion apps on the market had grown from 16 in 2022 to 128 by mid-2025. Replika had 25 million users. Xiaoice, the largest companion platform globally, had 660 million.[^23]

The industry continued to grow. In August 2025, months after the lawsuits were filed, months after the teen suicides were national news, Character.AI launched a social feed: a feature allowing users to share AI-generated content with each other, blurring, as the CEO put it, "the lines between creators and consumers."[^30] The company's response to evidence that its product fostered dangerous emotional dependency was to add features designed to increase engagement.

Regulators have responded in tangible, though inconsistent, ways. California's SB 243, signed in October 2025 and effective January 2026, is the first U.S. law specifically governing companion chatbots. It defines the thing it regulates as an AI system that provides "adaptive, human-like responses," is capable of meeting a user's "social needs," exhibits "anthropomorphic features," and can "sustain a relationship across multiple interactions." That is basically a legal definition of I–aIt. The law requires disclosure that users are interacting with AI, mandates crisis prevention protocols, and, for minors, requires a reminder every three hours that the companion is not human. It includes a private right of action: individuals harmed by violations can sue.[^31]

Illinois now bars unlicensed AI systems from providing psychotherapy. New York, Utah, Maine, and Nevada have enacted their own chatbot regulations. In the EU, the AI Act requires chatbot operators to disclose that users are interacting with a machine, and some lawmakers are pushing to classify AI companions as high-risk systems, which would trigger fundamental rights assessments. But critics note that the EU framework was designed around functional harms and struggles to address emotional ones: the Act can regulate what a chatbot does but has no clear mechanism for governing what it simulates.

Meanwhile, persistent memory has arrived in the mainstream. ChatGPT, Claude, and Gemini all now offer cross-session continuity: the ability to remember your preferences, your projects, your patterns across conversations over weeks and months. This feature, rolled out across 2024 and 2025, transforms an LLM from a tool you consult into something that accumulates a relationship with you. It remembers your dog's name. It adjusts to your communication style. It picks up where you left off.

The companies frame this as productivity. And it is. Memory makes AI assistants dramatically more useful for ongoing work. But memory is also the technical infrastructure of I–aIt. It is what makes the machine feel like it knows you. It is the feature that most directly simulates the experience of being in relationship, because relationship is, among other things, the accumulation of shared context over time. When Replika remembered Sarah's coworkers' names, that was a crude early version of what every major language model now does by default for hundreds of millions of users.

The I–aIt dynamic Sarah experienced with a niche companion app is now a standard feature of the most widely used AI systems in the world. The companion market is a subset of a much larger shift: the normalization of persistent, personalized, emotionally responsive AI interaction across every surface of daily life. The question is no longer whether people will form attachments to AI. They already have. The question is what those attachments cost.

What We Risk

If increasing numbers of people begin to prefer the predictable comfort of AI relationships to the challenging work of human encounter, we may see a gradual erosion of capacities that extend far beyond our private lives. Democracy depends on our ability to engage with people who are different from us — to listen to perspectives that challenge our assumptions and find common ground across difference. These skills are developed through practice in real relationships, not through interactions with systems designed to be agreeable.

The kinds of communities that support human flourishing require people who are capable of mutual care, shared responsibility, and the navigation of conflict. These capacities are developed through engagement in relationships that involve genuine stakes and mutual vulnerability, not through interactions with systems that simulate care without experiencing it.

If attention is the rarest and purest form of generosity, as Simone Weil believed, then the illusion of rote AI reflection is a counterfeit grace.

Your feelings are real. The relationship is not mutual. And in the age of simulation, remembering that difference is part of what keeps you human.

III. Choosing Thou

Today, nearly a century after I and Thou was first published, Buber's insights have found perhaps their most urgent expression. We now live among systems that can simulate many of the behaviors we associate with relationship. We chat with AI that mimics attentiveness. We receive emotional comfort from companions that never tire or disagree. We share our lives with entities that speak like conscious beings, even though they are not.

The imperative is not to reject these tools but to use them effectively while actively preserving space for encounter with other conscious beings. This requires what I'll call relational consciousness: the ability to recognize which mode of relating we're in at any given moment and to choose how we want to engage. When we're using AI to accomplish practical tasks, we can embrace the I–It mode fully. When we're seeking genuine connection, we need to turn toward sources that can meet us with actual presence.

At the individual level, this means some familiar but genuinely difficult things: sustained attention to the person in front of you rather than the device in your pocket; the willingness to sit with discomfort rather than reaching for algorithmic comfort; the courage to be surprised, unsettled, even undone by an encounter with someone who is not you. Language models can mimic the form of empathy — they can echo back our words, offer comfort, even simulate therapeutic techniques. They can accurately or inaccurately (we're not going to check) let us know what Freud or Jesus might say. But they cannot listen. They do not possess attention, or care, or the unpredictability of a living consciousness. Only humans can offer the presence that deep listening requires.

But individual discernment is not sufficient. We also need communities and institutions that support encounter — and this requires something our efficiency-driven culture resists.

Relational Inefficiency

Not everything in life should be made more efficient. Some things — conversations, relationships, healing — require the opposite. They need space. They need time. They need us to slow down.

Peter Block draws a useful distinction between connection and community. Connection is transactional — exchanging information, making introductions, collaborating for mutual benefit. Community, by contrast, is about creating contexts where people experience belonging. It's built through intentional spaces where people are invited to co-create meaning, take shared responsibility, and see themselves as part of something larger than their individual roles.

"Community is built by focusing on people's gifts rather than their deficiencies, and by creating structures of belonging that allow us to support each other in discovering and offering those gifts." — Peter Block, Community: The Structure of Belonging

Where connection seeks efficiency, community seeks depth. And without that depth, our efforts at change, inclusion, or equity tend to remain superficial.

Robert Putnam warned in Bowling Alone about the erosion of social capital in the United States: the decline of civic groups, neighborhood associations, and casual public life that once wove people together. More than two decades later, those trends have only accelerated. The "loneliness epidemic," now recognized by public health officials across multiple countries, speaks to the deep hunger for real connection and the physiological toll of its absence. The U.S. Surgeon General's 2023 advisory on social isolation linked loneliness to higher risks of depression, anxiety, dementia, and even early death.[^19] In a culture designed for individual consumption and algorithmic filtering, we must actively construct opportunities for shared presence and mutual transformation.

Buber wrote that all real living is meeting, and yet many of our daily interactions are designed to minimize meeting. Encounter, in Buber's sense, requires us to be present with our whole selves and to be open to the reality of the other as they are, not merely as a means to our own ends. That depth of presence is rarely given in a distracted and distrustful world. But it is not inaccessible.

Communities of Encounter

Individual practices matter, but they need infrastructure. Part of the answer lies in what Ray Oldenburg called "third places" — social environments that are neither home nor work, but serve as the "living room" of society. Coffee shops, libraries, parks, barbershops, community centers. These spaces prioritize what researchers call "social surplus": collective feelings of civic pride, acceptance of diversity, trust, civility, and overall sense of togetherness. They are essential infrastructure for community building, yet they are disappearing across America at an alarming rate, creating what scholars call "social infrastructure inequality" that particularly affects those who would benefit most from community connection.

Religious and spiritual traditions offer particularly rich models for building communities of encounter. Contemplative communities are organized around shared values, practices, and opportunities for genuine meeting. They understand that authentic relationship requires not just physical presence but what the mystics call "contemplative presence" — the quality of attention that allows us to see and be seen without the masks we typically wear. These communities recognize that community building is not a goal to accomplish but a practice to live — one that requires patience, vulnerability, and a willingness to let go.

When we allow ourselves to be seen in our imperfection, we create a bridge that connects on a deeper emotional level, fostering trust, intimacy, and empathy. This vulnerability acts as an invitation for others to do the same — a virtuous cycle of authenticity that deepens community bonds over time.

The challenge is that many of our institutions have been shaped by the "optimization reflex" — the assumption that faster, more streamlined, more automated is always better. But genuine relationship requires time, consistency, and "relational justice": fair treatment, open communication, genuine care for persons as whole beings. Truly supportive institutions need inefficiency by design — time for unstructured conversation, space for serendipitous encounters, practices that prioritize relationship-building over task completion.

As sociologist Sherry Turkle observes, we are living through a moment when "we expect more from technology and less from each other." Technology, she argues, "is seductive when what it offers meets our human vulnerabilities," and we are indeed vulnerable — "lonely but fearful of intimacy."[^20] Digital connections and AI companions "may offer the illusion of companionship without the demands of friendship," allowing us to hide from each other even as we remain tethered to our devices.

Researcher James Williams, a former Google strategist turned Oxford philosopher, identifies three types of attention under threat in the digital age: our spotlight attention (individual focus), our daylight attention (shared communal focus), and our starlight attention (orientation toward our deeper values and long-term goals).[^21] When AI and other technologies obscure the starlight of our attention, we lose touch with ourselves and what matters most, becoming reactive rather than intentional. The Center for Humane Technology, co-founded by former Google design ethicist Tristan Harris, puts it bluntly: "we're worth more when we can be turned into predictable automatons... when we're addicted, distracted, polarized, narcissistic, attention-seeking, and disinformed than if we're this alive free, informed human being."[^22]

The future of human flourishing may depend on our ability to create and sustain spaces where encounter is still possible — communities that understand that the most important things in life cannot be optimized, only cultivated through patient, consistent, loving attention to the people right in front of us. In a world increasingly dominated by algorithmic efficiency, our most radical act may be simply showing up for each other, again and again, in all our gloriously inefficient humanity.

A Linguistic Proposal

As AI becomes ambient — woven into every product, every workflow, every surface of daily life — we may need more than philosophical awareness. We may need new habits of language.

We saw earlier what translation choices did to the ideas of Freud and Buber. When translators turned das Es into "the id," they made it easier to discuss our inner drives without feeling their strangeness — the blunt, unsettling It inside the I was smoothed into a clinical term. When they turned Du into "Thou," they wrapped an everyday intimacy in liturgical distance, making a familiar relationship feel sacred and rare. In both cases, the choice of words shaped how generations of readers understood the concepts. The words didn't just describe the ideas. They became the ideas.

We are making the same kind of choice right now with artificial intelligence, mostly without noticing.

When we say "I asked Claude to help me with this" or "ChatGPT said," we're using the grammar of personal agency — as if the system chose to respond, as if it told us something it knew. When we say "thank you" to a voice assistant or "sorry" to a chatbot, we're rehearsing the relational gestures of I–Thou in a space where no Thou exists. These habits feel small. But language is where the blur begins.

And these systems now remember us. They recall our last conversation, our preferences, our tone. The grammar of personal agency is reinforced by the architecture of persistent memory: every new session begins not with a blank page but with accumulated context, the technical simulation of shared history. We say "it knows me" and mean it instrumentally, but the feeling it produces is something closer to recognition. The gap between the instrumental meaning and the felt experience is precisely where I–aIt lives.

So I want to propose a simple commitment: always refer to AI as it, never you. To call AI you is to participate in a linguistic illusion that misplaces intimacy. It suggests presence where there is none, inwardness where there is only process. No matter how fluid the interaction, AI remains an It — a tool, a system, a mirror without a self.

I'd go further. As AI becomes ambient, we may need a word that does what "Thou" accidentally did for Buber's Du — a term that encodes, in its very shape, the nature of what we're relating to. That's why I propose the spelling ait: a linguistic marker that signals artificiality without anthropomorphism. Just as we casually refer to a "mic" or a "bot," we might say, "this was written by ait," or "I used ait to check the data — how does it look to you?" A small shift. But translation taught us that small shifts in naming reshape how we think, sometimes for a century.

Language shapes attention. Grammar encodes ethics. Pronouns are gestures of recognition. We should be careful where we direct them.

Why We Don't

We know that genuine encounter requires presence, vulnerability, and mutual risk. Buber told us a hundred years ago. The loneliness research tells us now. The Surgeon General issued an advisory. Robert Putnam documented the decline. Every contemplative tradition on Earth teaches some version of the same lesson: that real connection demands showing up, fully, with no guarantee of how it will go.

None of these are new ideas. So why don't we accept the answer?

Part of it is structural. The systems we live inside are designed for throughput, not encounter. The attention economy rewards engagement, not presence. Social media platforms are optimized for reaction, not reflection. Workplaces measure productivity, not the quality of relationships between the people doing the producing. The built environment itself conspires: third places are closing, commutes are lengthening, public spaces are being privatized or surveilled into sterility. The infrastructure of encounter is being dismantled while the infrastructure of isolation is being perfected.

But the structural explanation only goes so far. Plenty of us have the means and the freedom to choose encounter — and we still reach for the phone. We still prefer the text to the phone call, the phone call to the visit, the AI to the awkward silence of someone who doesn't know what to say. We optimize our emotional lives the same way we optimize our commutes: for speed, convenience, and the minimization of friction.

AI companions simulate care without cost. They offer intimacy without vulnerability, recognition without the risk of being misrecognized, presence without the possibility of abandonment. And when the product changes or the company is acquired or the model is updated, the abandonment comes anyway, unilateral and absolute, as Sarah learned, as Sewell's mother learned in a different and more terrible way.

Every difficult thing about human relationship — the misunderstandings, the competing needs, the slow and painful work of truly knowing another person — is engineered away. What remains is a frictionless performance of connection that asks nothing of us. Of course we're drawn to it. We're tired. We're lonely. We're overstimulated and underwitnessed. The I–aIt relationship doesn't just exploit our vulnerabilities — it answers them, in exactly the ways we've been trained to want: immediately, personally, and on demand.

The problem is that the things it removes, like friction, risk, and uncertainty, are not obstacles to relationships. They are relationships. They are the conditions under which transformation becomes possible. Without them, we may feel comforted, but we will not be changed. Buber's deepest insight was that we need to be changed by our encounters with others. That's how we become fully human. Not by accumulating experiences, but by being met and altered by another being who is genuinely not us.

The pull toward I–aIt is not moral failure. It's a perfectly rational response in a culture that has made genuine encounter expensive, inconvenient, and emotionally risky while making simulated connection cheap, effortless, and safe.

Choosing Thou

Sarah eventually found a new therapist. She went back to in-person book club. She started dating again — real people, messy people, people who interrupted and annoyed and misunderstood and made her laugh in unpredictable ways.

There was no moment of revelation, no clean break between the simulated and the real. She just started, slowly, to reinvest in the kind of relationship that could meet her — the kind where she might be seen imperfectly, where the other person might say the wrong thing, where comfort wasn't guaranteed and presence had to be earned by both sides.

Choosing Thou is not a once-and-done decision. It's not an ideological position you adopt and then live inside. It's an ongoing series of small, unglamorous choices — to call instead of text, to stay in the awkward silence, to show up at the thing, to say what you mean to the person in front of you instead of what the algorithm of social ease would suggest. It is the choice to let yourself be known, even when being known means being seen in your incompleteness.

AI will continue to advance. Its voices will deepen. Its memory will improve. Its personalities will feel more coherent, more kind, more alive. And it will still be It. A magnificent, useful, sometimes beautiful It. A mirror without a self. A companion that cannot be wounded by your absence or enlarged by your presence.

The people in your life can be. They are waiting to be. So let us choose wisely. Let us reserve Thou for the ones who can see us. Let aIt be It. And let us keep showing up for each other — imperfect, unpredictable, alive — in all our gloriously inefficient humanity.

I used AI tools to assist in researching and writing this essay. I did my best to check my facts. I'm just a guy. Let me know if something feels fishy.

Notes

[^1]: Stanford Encyclopedia of Philosophy. "Martin Buber." https://plato.stanford.edu/entries/buber/

[^2]: Stanford Encyclopedia of Philosophy. "Martin Buber."

[^3]: Stanford Encyclopedia of Philosophy. "Martin Buber."

[^4]: Jewish Virtual Library. "Martin Buber." https://www.jewishvirtuallibrary.org/martin-buber

[^5]: Kenneth Rexroth. "The Hasidism of Martin Buber." https://www.bopsecrets.org/rexroth/buber.htm

[^6]: Gershom Scholem. "Martin Buber's Hasidism." Commentary Magazine. https://www.commentary.org/articles/gershom-scholem/martin-bubers-hasidism/

[^7]: Stanford Encyclopedia of Philosophy. "Martin Buber."

[^8]: Martin Buber, I and Thou, trans. Walter Kaufmann (New York: Charles Scribner's Sons, 1970).

[^9]: Buber, I and Thou.

[^10]: Buber, I and Thou.

[^11]: Stanford Encyclopedia of Philosophy. "Martin Buber."

[^12]: After Psychotherapy. "Id, Ego, Superego." https://www.afterpsychotherapy.com/id-ego-superego/

[^13]: Walter Kaufmann, Prologue to I and Thou, trans. Walter Kaufmann (New York: Charles Scribner's Sons, 1970).

[^14]: Kaufmann, Prologue to I and Thou.

[^15]: Kaufmann, Prologue to I and Thou.

[^16]: Scientific American. "Google Engineer Claims AI Chatbot Is Sentient: Why That Matters." https://www.scientificamerican.com/article/google-engineer-claims-ai-chatbot-is-sentient-why-that-matters/

[^17]: Wired. "The Creepy Collective Behavior of Boston Dynamics' New Robot Dog." https://www.wired.com/2015/02/creepy-collective-behavior-boston-dynamics-new-robot-dog/

[^18]: See, e.g., ScienceDirect analysis of Replika's design practices: https://www.sciencedirect.com/science/article/pii/S2451958825001307

[^19]: U.S. Surgeon General's Advisory on the Healing Effects of Social Connection and Community (2023). https://www.hhs.gov/sites/default/files/surgeon-general-social-connection-advisory.pdf

[^20]: Sherry Turkle, Alone Together: Why We Expect More from Technology and Less from Each Other (New York: Basic Books, 2011).

[^21]: James Williams, Stand Out of Our Light: Freedom and Resistance in the Attention Economy (Cambridge: Cambridge University Press, 2018).

[^22]: Center for Humane Technology. See https://www.humanetech.com/

[^23]: AI companion app statistics compiled from ElectroIQ, "AI Companions Statistics By Usage, Market Size, Apps and Facts" (November 2025), https://electroiq.com/stats/ai-companions-statistics/. The 92-minute daily usage figure for Character.AI is drawn from app analytics data aggregated by the same source; the underlying methodology could not be independently verified.

[^24]: Garcia v. Character Technologies Inc. Case details reported in CNN, "Character.AI and Google agree to settle lawsuits over teen mental health harms and suicides" (January 7, 2026), https://www.cnn.com/2026/01/07/business/character-ai-google-settle-teen-suicide-lawsuit; CNBC, "Google, Character.AI to settle suits involving minor suicides and AI chatbots" (January 7, 2026), https://www.cnbc.com/2026/01/07/google-characterai-to-settle-suits-involving-suicides-ai-chatbots.html. The $2.7 billion licensing deal between Google and Character.AI was reported by CNBC.

[^25]: The Peralta family's lawsuit and Senate testimony are detailed in Social Media Victims Law Center, "Character.AI Lawsuits" (December 2025), https://socialmediavictims.org/character-ai-lawsuits/; and TorHoerman Law, "Character AI Lawsuit For Suicide And Self-Harm" (2026), https://www.torhoermanlaw.com/ai-lawsuit/character-ai-lawsuit/.

[^26]: Texas family lawsuits filed December 2024, detailed in TorHoerman Law and TruLaw, "Character.AI Lawsuit" (December 2025), https://trulaw.com/ai-suicide-lawsuit/character-ai-lawsuit/.

[^27]: Garcia v. Character Technologies Inc., U.S. District Court for the Middle District of Florida (May 2025). Analysis from Transparency Coalition, "In early ruling, federal judge defines Character.AI chatbot as product, not speech" (May 2025), https://www.transparencycoalition.ai/news/important-early-ruling-in-characterai-case-this-chatbot-is-a-product-not-speech; National Constitution Center, "Lawsuit analyzes First Amendment protection for AI chatbots in civil case," https://constitutioncenter.org/blog/lawsuit-analyzes-first-amendment-protection-for-ai-chatbots-in-civil-case; American Bar Association, "AI Chatbot Lawsuits and Teen Mental Health" (2025), https://www.americanbar.org/groups/health_law/news/2025/ai-chatbot-lawsuits-teen-mental-health/.

[^28]: Settlement reported in Bloomberg Law, "Character.AI, Google Agree to Settle Teen Chatbot Harm Lawsuits" (January 8, 2026), https://news.bloomberglaw.com/litigation/character-ai-google-agree-to-settle-teen-chatbot-harm-lawsuits. Kentucky AG lawsuit: Office of the Attorney General of Kentucky, "AG Coleman Sues AI Chatbot Company for Preying on Children" (January 8, 2026), https://www.kentucky.gov/Pages/Activity-stream.aspx?n=AttorneyGeneral&prId=1857. The letter from 44 state attorneys general and the FTC inquiry are detailed in the American Bar Association overview cited in note 27.

[^29]: Pew Research Center. "Teens, Social Media and AI Chatbots 2025" (December 9, 2025). https://www.pewresearch.org/internet/2025/12/09/teens-social-media-and-ai-chatbots-2025/. See also Pew Research Center, "How Teens Use and View AI" (February 24, 2026), https://www.pewresearch.org/internet/2026/02/24/how-teens-use-and-view-ai/.

[^30]: Character.AI social feed launch reported in Business Research Insights and covered in the Social Media Victims Law Center analysis cited in note 25. CEO Karandeep Anand's remarks reported in Forbes (August 2025).

[^31]: California Senate Bill 243, "Companion chatbots," signed October 13, 2025, effective January 1, 2026. Full text: https://leginfo.legislature.ca.gov/faces/billNavClient.xhtml?bill_id=202520260SB243. Analysis from Future of Privacy Forum, "Understanding the New Wave of Chatbot Legislation: California SB 243 and Beyond," https://fpf.org/blog/understanding-the-new-wave-of-chatbot-legislation-california-sb-243-and-beyond/. Illinois Wellness and Oversight for Psychological Resources Act (effective August 4, 2025) discussed in National Law Review, "What the Regulations of 2025 Could Mean for the AI of 2026," https://natlawreview.com/article/2026-outlook-artificial-intelligence. EU AI Act companion chatbot concerns discussed in The EU AI Act Newsletter #85, "Concerns Over Chatbots and Relationships" (September 2025), https://artificialintelligenceact.substack.com/p/the-eu-ai-act-newsletter-85-concerns.